Check Plugin Documentation

Get started quickly with our comprehensive guides

About the plugin

How to limit the crawl rate of bots in a website

Increasing the volume of visits is one of the basic objectives of most websites, and in most cases the majority of visits come from the main internet search engines, such as Google and Bing.

Indeed, for the pages in our site to appear in the search result pages of these search engines, they must have previously been added to their indexes.

To index a site, search engines run specialized applications commonly referred to as ‘bots’, ‘crawlers’ or ‘spiders’. These bots navigate the content of web sites, reading the content of the pages they find.

In principle, being actively crawled by different search engines is a good sign for a site. However, the number of requests made by crawlers can become excessive and degrade the performance of the server, making the site appear slow or unresponsive to users. In this post we will review different possibilities to limit the crawl rate of the main internet search engines.

1. Identify which bots are crawling the site at an excessive crawl rate

The most straightforward way to evaluate the load imposed by bots on the server is to analyze the site’s access logs and count the requests made by each User Agent whose value includes the string ‘bot’, ‘spider’ or ‘crawler’.
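The counting step described above can be sketched in a few lines of Python. This is only an illustration: it assumes the access log uses the common "combined" format, where the User Agent is the last quoted field on each line, and the sample lines, the helper name `count_bot_hits` and the IP addresses are made up for the example.

```python
import re
from collections import Counter

# The User Agent is assumed to be the last quoted field of each log line.
UA_FIELD = re.compile(r'"([^"]*)"\s*$')
# Strings that mark a User Agent as a probable bot.
BOT_HINT = re.compile(r"bot|spider|crawler", re.IGNORECASE)

def count_bot_hits(lines):
    """Return a Counter mapping each bot-like User Agent to its hit count."""
    hits = Counter()
    for line in lines:
        match = UA_FIELD.search(line)
        if match and BOT_HINT.search(match.group(1)):
            hits[match.group(1)] += 1
    return hits

# Made-up sample lines, for illustration only.
sample_log = [
    '203.0.113.5 - - [10/Oct/2016:13:55:36 +0000] "GET / HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.6 - - [10/Oct/2016:13:55:37 +0000] "GET /a HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '198.51.100.7 - - [10/Oct/2016:13:55:38 +0000] "GET /b HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (Windows NT 10.0) Firefox/49.0"',
]

for agent, count in count_bot_hits(sample_log).most_common():
    print(count, "hits", agent)
```

For a real log, the list can be replaced by an open file object, since the function only iterates over lines.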

To illustrate this post, we have analyzed the access log of one of our sites, with the following result:

- 28576 hits bingbot

- 14425 hits Baiduspider

- 12289 hits Mediapartners-Google (bot.html)

- 11514 hits Googlebot

- 204 hits www.proximic.com/info/spider.php

- 201 hits ia_archiver (crawler@alexa.com)

- 15 hits AhrefsBot

- 10 hits GrapeShotCrawler

- 10 hits msnbot

- 9 hits YandexBot

- 67253 hits TOTAL

As we can see, the load imposed by bots does not seem to be excessive in this case: 67,253 total requests in one day gives an average of 0.78 requests/second, which most reasonably configured web servers should be able to handle.
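The average can be checked with a short calculation. The hit counts below are copied from the list above; the variable names are only illustrative.

```python
# Hit counts per bot, as listed above for one day of traffic.
hits_per_bot = {
    "bingbot": 28576,
    "Baiduspider": 14425,
    "Mediapartners-Google": 12289,
    "Googlebot": 11514,
    "proximic": 204,
    "ia_archiver": 201,
    "AhrefsBot": 15,
    "GrapeShotCrawler": 10,
    "msnbot": 10,
    "YandexBot": 9,
}

SECONDS_PER_DAY = 24 * 60 * 60

total_hits = sum(hits_per_bot.values())
requests_per_second = total_hits / SECONDS_PER_DAY

print(total_hits)                      # 67253
print(round(requests_per_second, 2))   # 0.78
```

Keep in mind that this is an average over the whole day; crawlers often arrive in bursts, so short-term peaks can be noticeably higher.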

Nevertheless, these requests consume CPU, RAM and bandwidth, so it is always worth knowing the possible ways to limit the crawl rate of bots.

Robots.txt file

What are robots.txt files and what are they used for?

A robots.txt file is a text file that resides in the root directory of your server. It contains rules for crawling your website and is a tool to communicate directly with search engines.

Basically, the file says which parts of your site Google is allowed to crawl and which parts it should leave alone.

However, why would you ever tell Google not to crawl something on your site? Isn’t that harmful from an SEO perspective? There are actually many reasons why you would tell Google not to crawl something on your site.

One of the most common uses of robots.txt is keeping a website that is still in its development stage out of the search results.

The same goes for a staging version of your site, where you try out changes before committing them to the live version.

Or, maybe you have some files on your server that you don’t want to pop up on the Internet because they are only for your users.

Basic robots.txt syntax

If you rolled your eyes at the word ‘syntax,’ don’t worry, you don’t have to learn a new programming language. There are only a few directives available. In fact, knowing just two of them is enough for most purposes:

User-agent – Defines the search engine crawler

Disallow – Tells the crawler to stay away from defined files, pages, or directories

If you are not going to set different rules for different crawlers or search engines, an asterisk (*) can be used to define universal directives for all of them. For example, to block everyone from your entire website, you would configure robots.txt in the following way:

User-agent: *

Disallow: /

This basically says that all directories are off limits for all search engines.

What’s important to note is that the file uses relative (and not absolute) paths. Since robots.txt resides in your root directory, the forward slash denotes a disallow for this location and everything it contains. To define single directories, such as your media folder, as off limits, you would have to write something like /wp-content/uploads/. Also keep in mind that paths are case sensitive.
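To see how such path rules are evaluated, here is a minimal sketch that uses Python's standard `urllib.robotparser` module as a stand-in for a well-behaved crawler. Real search engine crawlers use their own parsers, so treat this as an approximation; the bot name `AnyBot` and the helper `parse_rules` are made up for the example.

```python
from urllib.robotparser import RobotFileParser

def parse_rules(text):
    """Parse robots.txt content given as a string (no network access needed)."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser

# Block everything for every crawler.
block_everything = parse_rules("User-agent: *\nDisallow: /\n")

# Block only the media folder.
block_uploads = parse_rules("User-agent: *\nDisallow: /wp-content/uploads/\n")

print(block_everything.can_fetch("AnyBot", "/index.html"))

# Paths are matched as case-sensitive prefixes, so only the first
# of these two requests is covered by the Disallow rule.
print(block_uploads.can_fetch("AnyBot", "/wp-content/uploads/photo.png"))
print(block_uploads.can_fetch("AnyBot", "/WP-Content/uploads/photo.png"))
print(block_uploads.can_fetch("AnyBot", "/blog/"))
```

A quick check like this is a convenient way to test a rule before uploading the file to the server.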

If it makes sense for you, you can also allow and disallow parts of your site for certain bots. For example, the following code inside your robots.txt would give only Google full access to your website while keeping everyone else out:

User-agent: Googlebot

Disallow:

User-agent: *

Disallow: /

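It is good practice to define rules for specific crawlers at the beginning of the robots.txt file and to follow them with a User-agent: * wildcard as a catch-all for all spiders that don’t have explicit rules. Crawlers that follow the Robots Exclusion Protocol apply the most specific group that matches them, so the catch-all only affects bots without a group of their own.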
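The Googlebot example above can be checked in the same way. The sketch below again relies on Python's standard `urllib.robotparser`, which is only an approximation of how real crawlers read the file. Note that the parser is given the crawler's short name (its product token), not a full User Agent string.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: Googlebot
Disallow:

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Googlebot has its own group with an empty Disallow, so nothing is blocked.
googlebot_allowed = parser.can_fetch("Googlebot", "/any/page.html")

# Every other crawler falls back to the catch-all group.
bingbot_allowed = parser.can_fetch("bingbot", "/any/page.html")

print("Googlebot allowed:", googlebot_allowed)
print("bingbot allowed:", bingbot_allowed)
```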

Noteworthy names of user-agents include:

Googlebot – Google

Googlebot-Image – Google Image

Googlebot-News – Google News

Bingbot – Bing

Yahoo! Slurp – Yahoo (great choice in name, Yahoo!)

Again, let me remind you that Google, Yahoo, Bing and similar search engines will generally honor the directives in your file, but not every crawler out there will.

The plugin makes it easy to deal with the ‘bad bots’ that try to hack or spam your website, and it comes with a whole range of other features to help you in your work. These features include:

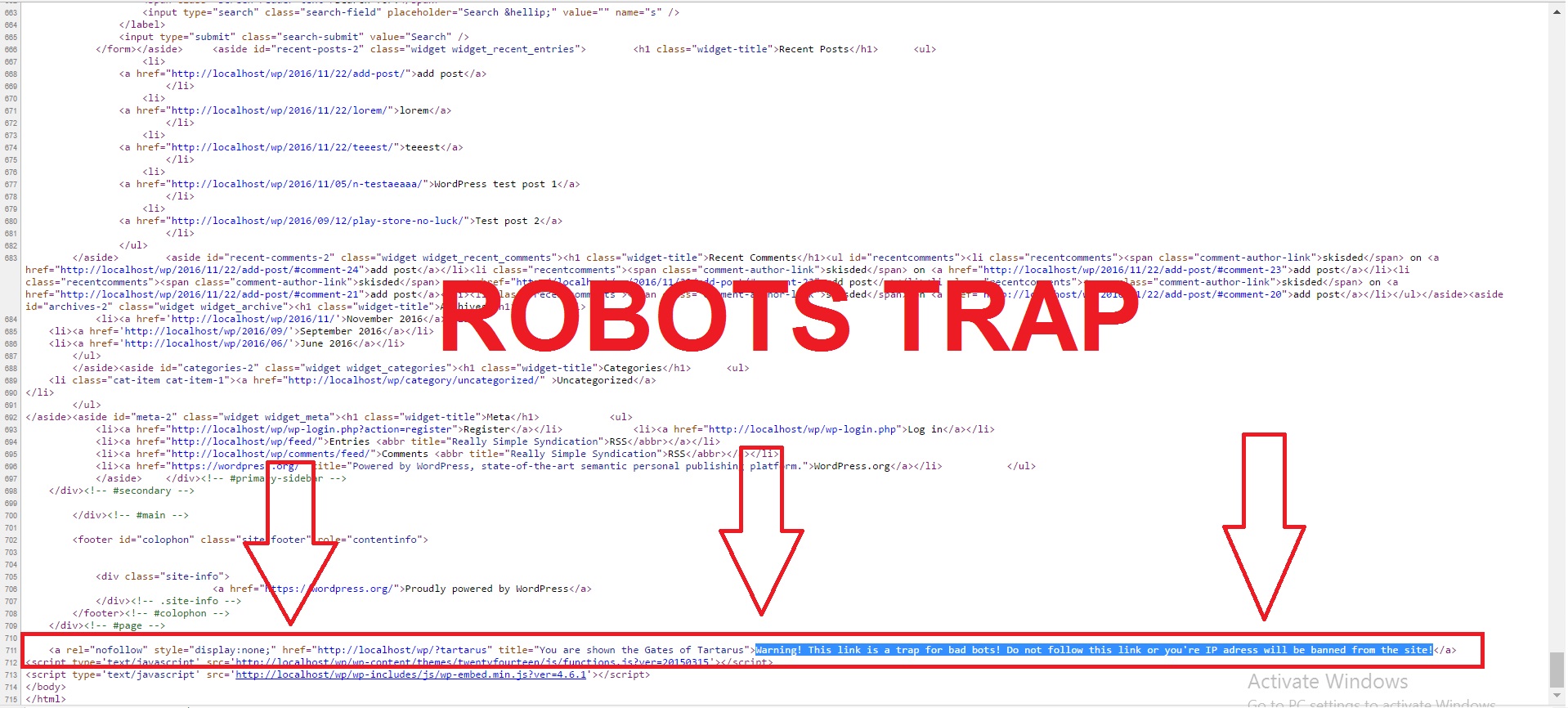

- Create a bot trap that will capture malicious robots that do not honor your robots.txt file

- You can define custom rules (with wildcard support!) that will ban robots and human users, based on their IP addresses and User Agent strings

- Full modern browser support – Google Chrome, Firefox, IE, Edge, Opera, Safari

- Full modern webcrawlers support – Google, Bing, Yahoo, Yandex, Baidu, Facebook, Twitter

- Useful for a wide range of websites, including: blogs, subscription sites, single sites and others

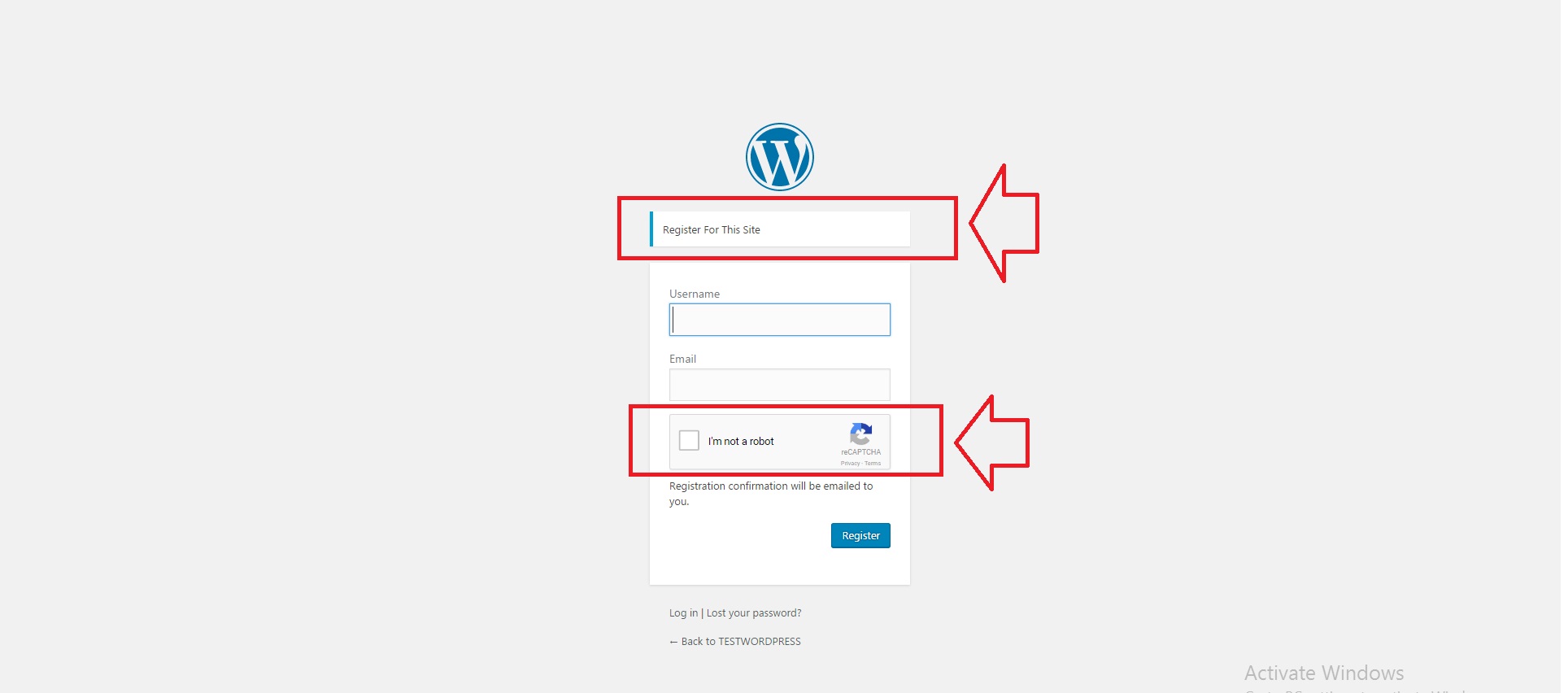

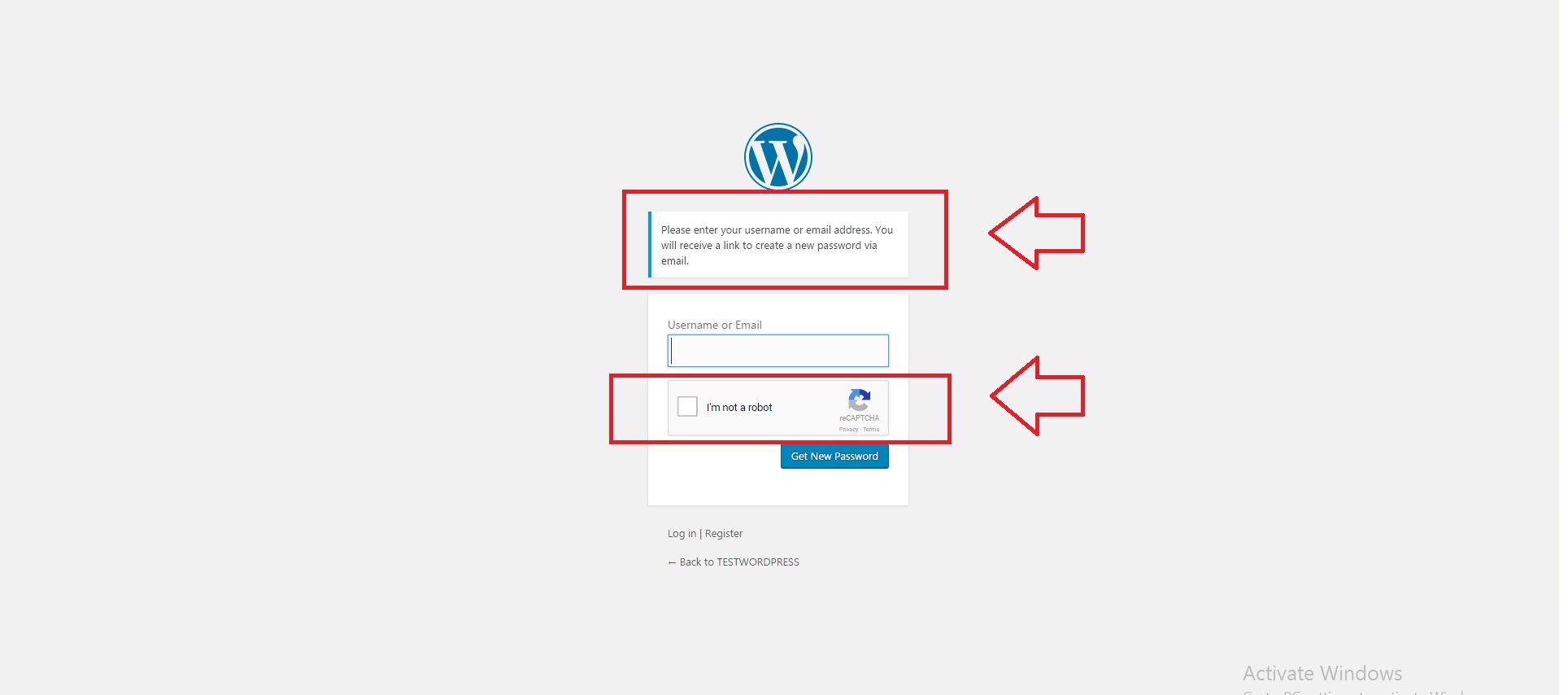

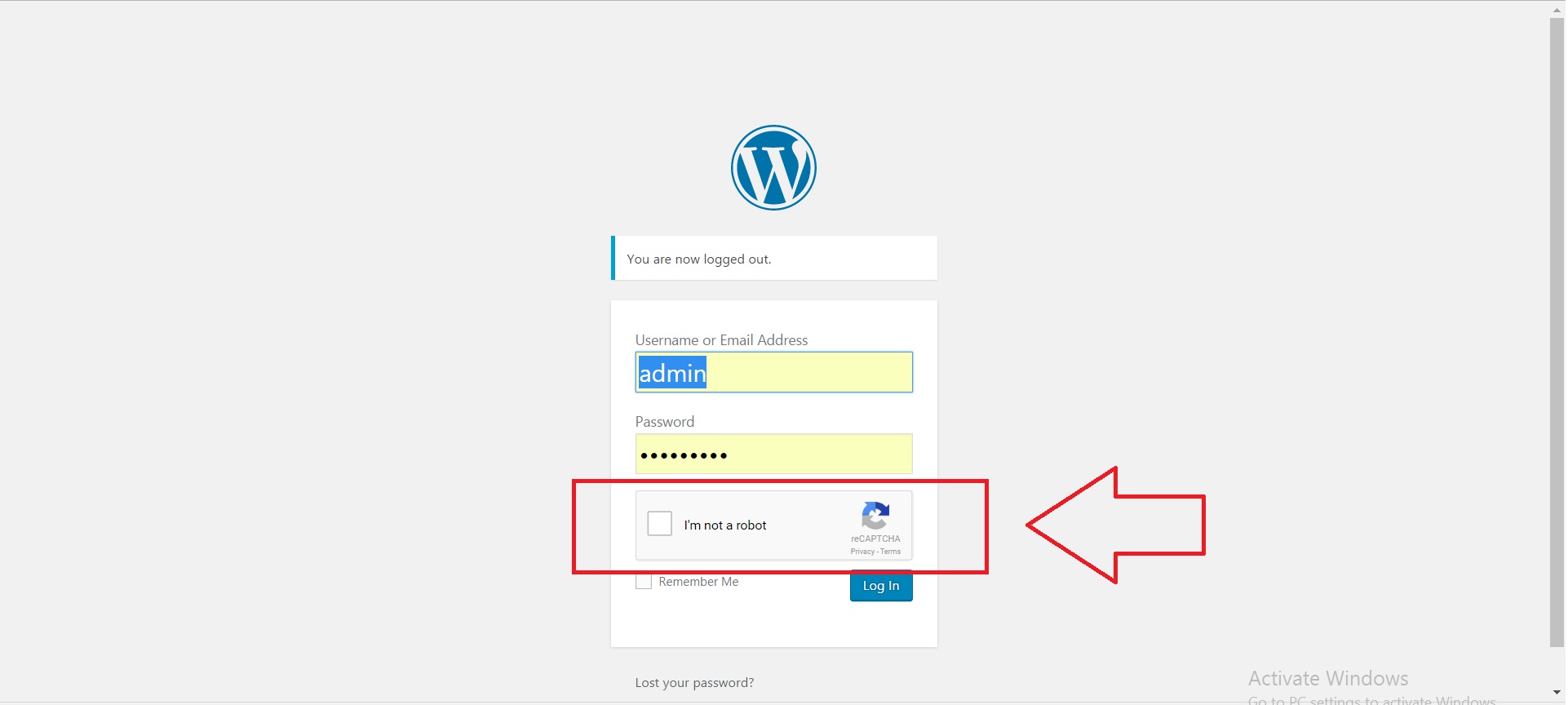

- reCAPTCHA integration support in your comments, log-in, register and forgot password forms

- Custom rule to automatically ban known ‘good bots’

- Custom rule to automatically ban known ‘bad bots’ – plugin contains a list of over 1000 of these malicious bots

- Ability to log your website visitors, in different categories for human and robot visitors

- Ability to exclude a list of robot names that will always be allowed to fully crawl your website

- Ability to exclude a list of robot IP addresses that will always be allowed to fully crawl your website

- Ability to customize the message shown to the banned bots

- Ability to set an alert to be sent by email to your custom email address every time a bot gets trapped by this plugin

- Plugin will check if mail sending feature is active and working in your WordPress installation and if not, it will alert you

- Optimized for speed – no speed impact

- Ability to add a shortcode for protecting email addresses shown in your pages from email-harvesting bots (uses JavaScript)

- Adds a layer of protection by customizing your .htaccess file, by adding exclusions for a wide range of known malicious spiders and bots

- Ability to view a wide range of information about the banned bot, including IP address, User Agent, ban type, URL, query string, protocol and many others

- A built-in robots.txt editor, to fully customize your robots.txt file

- A built-in .htaccess editor, to fully customize your .htaccess file

- Full documentation and tutorial included about robots.txt, .htaccess and many other topics

- Ability to edit the meta tags that are shown in the page types on your website, including: NOINDEX, NOFOLLOW, NOODP and NOYDIR. Page types include: posts, pages, home page, category, archive, search, taxonomy, not found, tag and media.

- Ability to lift restrictions and allow banned users to access login and admin pages – just to be sure you do not lock yourself out of WordPress

- Bot Comment Spam Blocker – sets up a honeypot trap, that will capture malicious spamming bots and will ban them

- Referrer Spam Blocker – detect referral spam sources and block them – getting rid of spikes in traffic in your Google Analytics data

- Responsive design, fully mobile compatible

- Translations ready

- Most feature rich ‘Bot Banning and Crawl Control’ Plugin for WordPress on the market!

- Lifetime updates and support.

WordPress installation

A Quick Install Guide is also provided, to make the plugin installation easy for everyone.

To install the plugin, take the .zip file you’ve downloaded and upload it via Plugins > Add New > Upload Plugin in the WordPress Dashboard. Once the plugin is installed, be sure to Activate it.

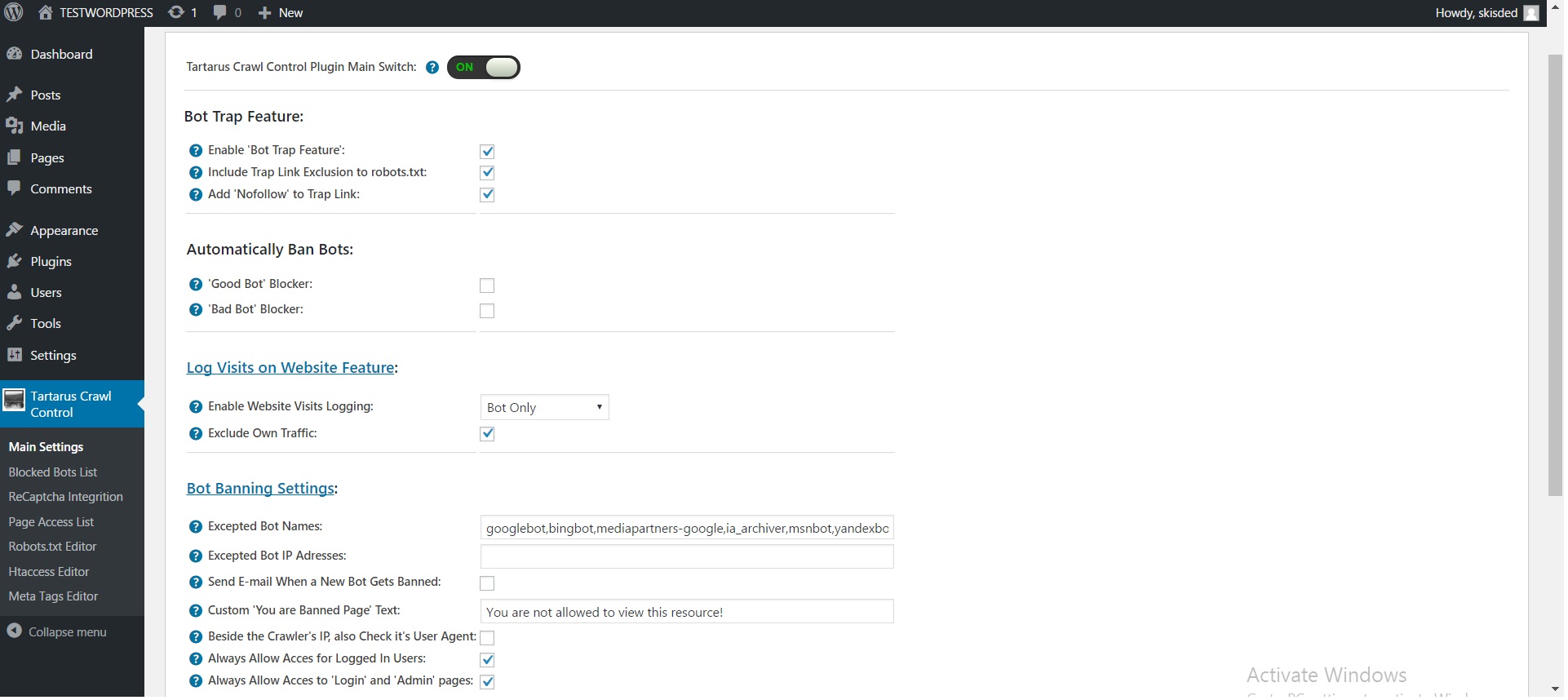

Now that you’ve installed and activated the plugin, you’ll see a new menu item created inside WordPress called ‘Tartarus Crawl Control’. First things first, let’s head over to Settings > Tartarus Crawl Control and take a look at what options are available.

Plugin Settings

Refreshingly, the Tartarus Crawl Control plugin has a super-simple settings screen. Let’s look first at the settings panel:

Here you can find the steps needed to configure the plugin, even if you have no HTML knowledge at all. The available options are described below.

HINT! Don’t forget to click the Save button every time you modify your settings, otherwise the modifications will be lost!

Main Settings:

- Tartarus Crawl Control Plugin Main Switch: Enable or disable the Tartarus Crawl Control Plugin. This acts like a main switch.

- Enable ‘Bot Trap Feature’: Do you want to activate the bot trap feature of this plugin? This feature will add a link to your website that is invisible to human visitors (but bots can still see it). Anyone who follows that link will be banned from your website.

- Include Trap Link Exclusion to robots.txt: This feature will add an entry to your robots.txt file that tells the crawling spiders not to follow the tartarus trap link. If they understand and follow this directive, they will not be caught in the bot trap. However, you may choose not to include this directive in your robots.txt file, and to catch and ban every robot that crawls your website.

- Add ‘Nofollow’ to Trap Link: This feature will add ‘NOFOLLOW’ directive to the link of the bot trap. It is highly recommended that you enable this feature (and also the ‘Include Trap Link Exclusion to robots.txt’ feature), to not ban innocent bots (that follow the robots.txt rules).

- ‘Good Bot’ Blocker: This feature detects and bans the major crawling bots (Google, Bing, Yahoo, etc.). Note that if you enable this, the SEO score of your website will be seriously affected! Use this only if your website suffers from massive slowdown from massive crawling. For a full list of blocked and allowed bots and spiders, please consult the plugin documentation.

- ‘Bad Bot’ Blocker: This feature blocks a large number of bots that are known to be aggressive, except the major ones that will benefit your website SEO (Google, Bing, Yahoo). For a full list of blocked and allowed bots and spiders, please consult the plugin documentation. This feature can improve your website’s performance.

- Extra ‘Good Bot’ Names to Ban: Add bot names that you consider are good bots. Notice that these bots will be automatically banned on access. You can check the initial good bot names in the plugin documentation. Add names separated by a comma.

- Extra ‘Bad Bot’ Names: Add bot names that you consider are bad bots. Note that they will be automatically banned on access. You can check the initial bad bot names in the plugin documentation. Add names separated by a comma.
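The interplay of the ‘Bot Trap Feature’, the robots.txt exclusion and the ‘Nofollow’ option can be illustrated with a small Python sketch. This is not the plugin's actual code (the plugin itself runs inside WordPress); the trap path, the function name and the in-memory ban list are all hypothetical and only show the idea.

```python
# Hypothetical trap URL; the plugin's real trap link may look different.
TRAP_PATH = "/tartarus-trap/"

# Entry that tells polite crawlers to stay away from the trap.
ROBOTS_TXT_EXCLUSION = f"User-agent: *\nDisallow: {TRAP_PATH}\n"

# Link hidden from human visitors, marked nofollow for polite crawlers.
TRAP_LINK_HTML = f'<a href="{TRAP_PATH}" rel="nofollow" style="display:none"></a>'

# In-memory ban list, for illustration only.
banned_ips = set()

def handle_request(ip, path):
    """Return an HTTP-like status code, banning any visitor that hits the trap."""
    if ip in banned_ips:
        return 403
    if path.startswith(TRAP_PATH):
        banned_ips.add(ip)
        return 403
    return 200

# A crawler that ignores robots.txt and nofollow walks into the trap...
print(handle_request("198.51.100.9", "/"))
print(handle_request("198.51.100.9", TRAP_PATH))
# ...and is refused from then on, while other visitors are unaffected.
print(handle_request("198.51.100.9", "/"))
print(handle_request("203.0.113.20", "/"))
```

Crawlers that honor the robots.txt entry or the nofollow attribute never request the trap URL, which is why enabling both options keeps well-behaved bots from being banned.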

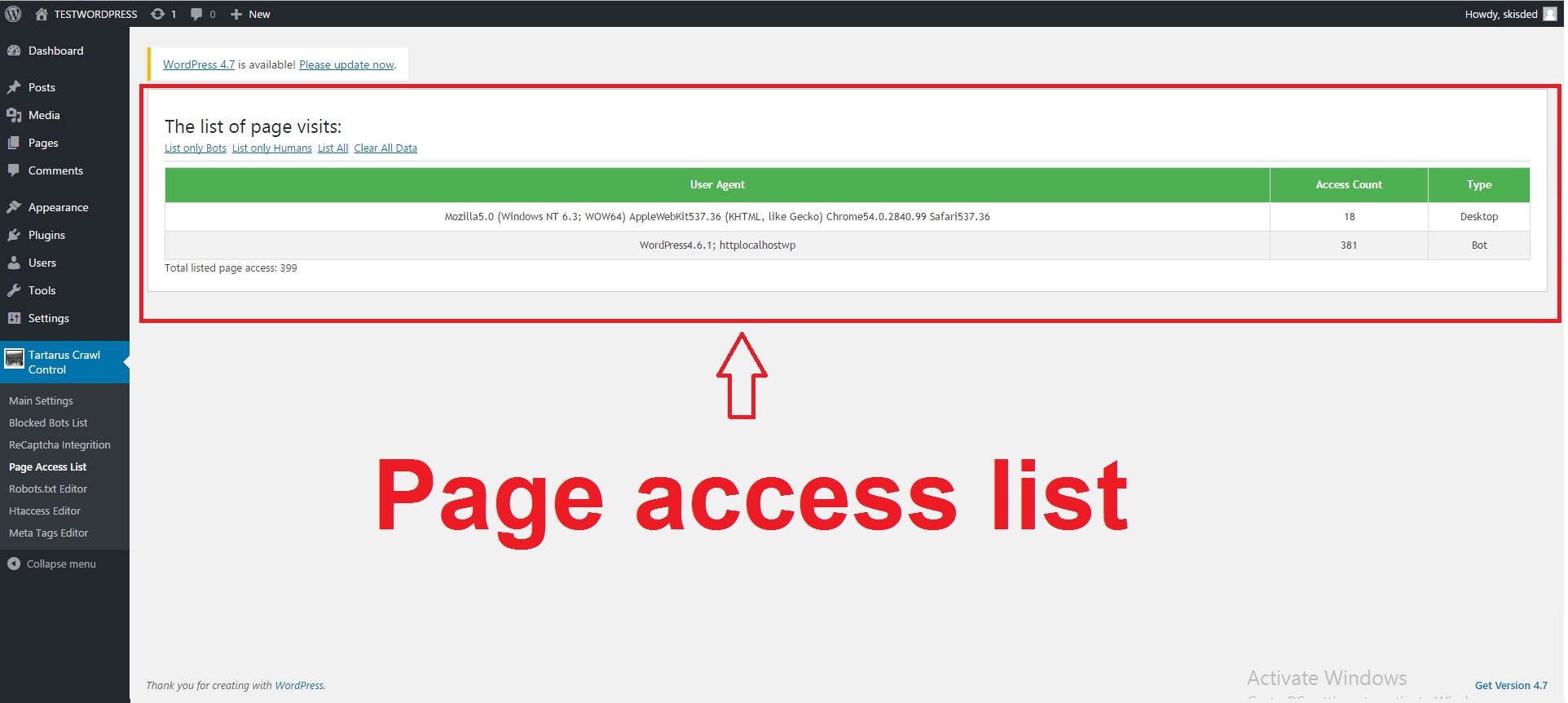

- Enable Website Visits Logging: This feature will log visits on your website. You can choose to log only bots, only humans or both.

- Exclude Own Traffic: Do you want to exclude the traffic coming from your own IP address? This feature is useful to get more precise results.

- Excepted Bot Names: The names of the bots that will be permitted to crawl your site under any conditions. Some known bot types are excepted by default. If you want to define more bot names, enter them all here, separated by commas. Wildcard expressions and regular expressions are also supported.

- Excepted Bot IP Adresses: The IP addresses of the bots that will be permitted to crawl your site under any conditions. If you want to define more bot IP addresses, enter them all here, separated by commas. Wildcard expressions and regular expressions are also supported.

- Send E-mail When a New Bot Gets Banned: Do you want to receive an e-mail every time a new bot gets banned from your website?

- Your E-mail Adress: The e-mail address where the e-mail should be sent. Note that this plugin will automatically detect and warn you, if e-mail sending does not function on your WordPress installation.

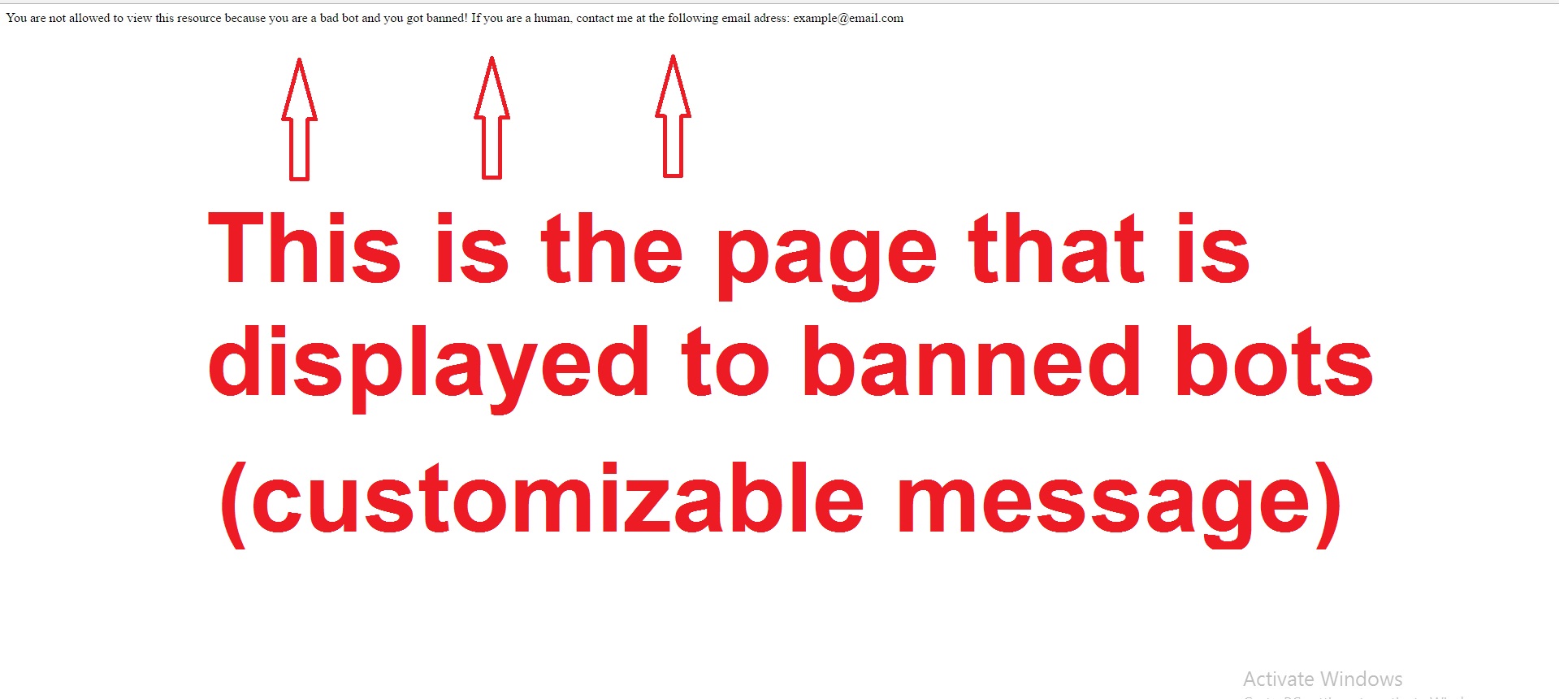

- Custom ‘You are Banned Page’ Text: The text to be shown to the banned bots. You can customize this text for human visitors who are curious and follow this link.

- Beside the Crawler’s IP, also Check it’s User Agent: Do you want to check both the IP address and the User Agent of visiting bots? If you uncheck this, the ban will be issued only for the IP address of the visitor. If you check this, the ban will be issued for the IP address and User Agent combination. So, if this is checked, the user or the bot can change its User Agent (or browser), and the ban will no longer apply.

- Always Allow Acces for Logged In Users: Allow access to the site for logged-in users even if they are banned. (Warning! Users will be banned as soon as they log out!)

- Always Allow Acces to ‘Login’ and ‘Admin’ pages: Allow access to login and admin pages even if the user is banned. Warning! If you deny access for yourself to login and admin pages, you risk locking yourself out of WordPress! Use this setting with caution!

- ‘Referral Spam’ Blocker: This feature blocks all spam coming from referral sites that are known to spam. A full list of these sites can be found in the plugin documentation.

- Referral Spam Extra URLs: Add URLs that you consider are referral spam sources. You can check the initial referral spam sources in the plugin documentation. Add sources separated by a comma.

- ‘Bot Comment Spam’ Blocker: This feature blocks all spam coming from comment-spamming bots. It uses a honeypot method to achieve this.

- ‘Bot Comment Spam’ Blocker Text to be Shown: The text to be shown to the spamming bots.

- ‘Bot Comment Spam’ Also Ban Bot IP: Do you also want to ban IP addresses that try to spam in your comment section? If you uncheck this, spam will only be blocked, but the bots’ IP addresses will not be banned.

- Block Known ‘Bad Bots’ with the .htaccess File: Do you also want to block some of the known bad bots, by using your .htaccess file? List of blocked bots can be found in the plugin documentation.
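The difference between banning by IP address only and banning by the IP address and User Agent combination (the ‘Beside the Crawler’s IP, also Check it’s User Agent’ option above) can be sketched as follows. The function names and the data layout are assumptions made for this illustration and do not reflect how the plugin stores its bans.

```python
def ban_key(ip, user_agent, check_user_agent):
    """Build the key under which a ban is stored and looked up."""
    return (ip, user_agent) if check_user_agent else (ip, None)

def is_banned(bans, ip, user_agent, check_user_agent):
    """Check whether a visitor matches an existing ban."""
    return ban_key(ip, user_agent, check_user_agent) in bans

# Ban the same visitor under each mode (address and agent names are made up).
ip_only_bans = {ban_key("198.51.100.9", "BadBot/1.0", check_user_agent=False)}
combined_bans = {ban_key("198.51.100.9", "BadBot/1.0", check_user_agent=True)}

# The visitor comes back from the same IP with a different User Agent.
still_banned_ip_only = is_banned(ip_only_bans, "198.51.100.9", "OtherAgent/2.0", False)
still_banned_combined = is_banned(combined_bans, "198.51.100.9", "OtherAgent/2.0", True)

print("IP-only ban still applies:", still_banned_ip_only)
print("IP + User Agent ban still applies:", still_banned_combined)
```

The combined mode is more precise when several visitors share one IP address, but it is easier to evade, because changing the User Agent is trivial for a bot.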

Blocked Bots List:

- Add a manual rule: Here you can add a manual rule that will apply on your website. Note that you can block access of specific IPs or specific User Agents (browsers or bots). Also note that you can use regular expressions here. For more information, check the plugin’s documentation file.

- New IP Address: Enter the IP address that you want to ban. Note that wildcards are supported!

- New User Agent: Enter the User Agent that you want to ban. Note that wildcards are supported!

- Delete column: Clicking on the ‘x’ in this column will delete the respective entry.

- Date column: Lists the date when the ban was issued. Click the column header to sort by this column.

- IP Address column: Lists the IP addresses that are banned. Click the column header to sort by this column.

- User Agent column: Lists the User Agent strings that are banned. Click the column header to sort by this column.

- Ban Reason column: Lists the ban reason for every ban entry. Click the column header to sort by this column.

- More Info column: Here you can see listed a link which will lead you to more information about the respective ban entry.
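As a rough illustration of how a manual rule with wildcards could be matched against a visitor, the sketch below uses shell-style patterns from Python's standard `fnmatch` module. The plugin's own wildcard syntax may differ, and the rule layout (a dictionary with optional `ip` and `user_agent` patterns) is an assumption made for the example.

```python
from fnmatch import fnmatchcase

def rule_matches(rule, ip, user_agent):
    """Return True if every pattern present in the rule matches the visitor."""
    ip_pattern = rule.get("ip")
    ua_pattern = rule.get("user_agent")
    if ip_pattern is None and ua_pattern is None:
        return False  # an empty rule bans nobody
    if ip_pattern is not None and not fnmatchcase(ip, ip_pattern):
        return False
    # User Agent strings are compared case-insensitively in this sketch.
    if ua_pattern is not None and not fnmatchcase(user_agent.lower(), ua_pattern.lower()):
        return False
    return True

# Hypothetical rules: one bans an address range, the other a User Agent family.
rules = [
    {"ip": "203.0.113.*"},
    {"user_agent": "*scrapy*"},
]

def is_blocked(ip, user_agent):
    return any(rule_matches(rule, ip, user_agent) for rule in rules)

print(is_blocked("203.0.113.42", "Mozilla/5.0"))
print(is_blocked("198.51.100.1", "Scrapy/1.0 (+http://scrapy.org)"))
print(is_blocked("198.51.100.1", "Mozilla/5.0"))
```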

Recaptcha Integrition:

- Enable ReCaptch Feature: Enable or disable ReCaptcha feature of this plugin.

- Site Key: Please register your blog through the Google reCAPTCHA admin page and enter the site key in the fields below.

Page Access List:

Robots.txt Editor

- ROBOTS.TXT: Web site owners use the /robots.txt file to give instructions about their site to web robots; this is called The Robots Exclusion Protocol. Change this only if you know what you are doing!

- Submit button: After you are done making your changes, click this button to save the file.

- Reset button: Click this button to restore the original version of the robots.txt file.

.htaccess Editor

- .htaccess: .htaccess is a configuration file for use on web servers running the Apache Web Server software. When a .htaccess file is placed in a directory that is served by the Apache Web Server, the file is detected and its directives are applied by the server. Change this only if you know what you are doing!

- Submit button: After you are done making your changes, click this button to save the file.

- Reset button: Click this button to restore the original version of the .htaccess file.

Meta Tags Editor

- Meta Tags Settings: Do you want to enable meta tags to tell bots your indexing preference? For more info about this feature, check plugin documentation.

- NOINDEX: You can add this to your robots meta tag in: posts, pages, category, archive, search, taxonomy, not found, home, tag and media pages. NOINDEX is a meta tag value that asks search robots not to index the content. The exact behaviour differs between search robots. The default behaviour is ‘Do not show this page in search results and do not show a ‘Cached’ link in search results’.

- NOFOLLOW: You can add this to your robots meta tag in: posts, pages, category, archive, search, taxonomy, not found, home, tag and media pages. NOFOLLOW is a meta tag value that asks search robots not to follow any links in the content. This can be especially useful when you do not want search engines to follow links to certain content on your site, or more importantly when you do not want search engines to follow outbound links to other people’s websites.

- NOODP: You can add this to your robots meta tag in: posts, pages, category, archive, search, taxonomy, not found, home, tag and media pages. NOODP is a meta tag value that tells search engines such as Google not to use the Open Directory Project as a source for generating titles and descriptions in search results.

- NOYDIR: You can add this to your robots meta tag in: posts, pages, category, archive, search, taxonomy, not found, home, tag and media pages. NOYDIR is a meta tag value that tells search engines such as Google not to use the Yahoo! Directory as a source for generating titles and descriptions in search results.
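The directives above end up in a single robots meta tag in the page's head section. The following sketch shows how such a tag could be assembled per page type; the settings dictionary and the function names are hypothetical and only illustrate the resulting HTML, not the plugin's internals.

```python
def robots_meta_tag(directives):
    """Build a robots meta tag from a list of directives (empty list: no tag)."""
    if not directives:
        return ""
    content = ", ".join(directive.lower() for directive in directives)
    return f'<meta name="robots" content="{content}">'

# Hypothetical per-page-type settings, as they might be chosen in the editor.
settings = {
    "search": ["NOINDEX", "NOFOLLOW"],
    "archive": ["NOINDEX"],
    "posts": [],
}

def tag_for(page_type):
    return robots_meta_tag(settings.get(page_type, []))

print(tag_for("search"))   # <meta name="robots" content="noindex, nofollow">
print(tag_for("archive"))  # <meta name="robots" content="noindex">
```

Unlike robots.txt rules, a robots meta tag is only seen by a crawler that is allowed to fetch the page, so a page blocked in robots.txt cannot pass on its NOINDEX request.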

Note that wildcards are also supported by the manual ban feature (in the ‘Blocked Bots List:’ page). If you are not sure what wildcards are and how they work, a Guide is provided that explains the mechanism of wildcards.

Which bots are included in the ‘Good Bots’ List of this plugin?

twitterbot, slurp, ia_archiver, facebookexternalhit, facebot, googlebot, mediapartners, adsbot, Yahoo, baidu, yandex, yahooseeker, yahoobot, msnbot, watchmouse, pingdom, feedfetcher-google, bingbot, bingpreview

Which bots are included in the ‘Bad Bots’ List of this plugin?

bot, SiteLockSpider, crawler, archiver, transcoder, spider, uptime, validator, fetcher, MJ12bot, Ezooms, AhrefsBot, FHscan, Acunetix, Zyborg, ZmEu, Zeus, Xenu, Xaldon, WWW-Collector-E, WWWOFFLE, WISENutbot, Widow, Whacker, WebZIP, WebWhacker, WebStripper, Webster, Website Quester, Website eXtractor, WebSauger, WebReaper, WebmasterWorldForumBot, WebLeacher, Web.Image.Collector, WebGo IS, WebFetch, WebEnhancer, WebEMailExtrac, WebCopier, Webclipping.com, WebBandit, WebAuto, Web Sucker, Web Image Collector, VoidEYE, VCI, Vacuum, URLy.Warning, TurnitinBot, turingos, True_Robot, Titan, TightTwatBot, TheNomad, The.Intraformant, TurnitinBot/1.5, Telesoft, Teleport, tAkeOut, Szukacz/1.4, suzuran, Surfbot, SuperHTTP, SuperBot, Sucker, Stripper, Sqworm, spanner, SpankBot, SpaceBison, sogou, Snoopy, Snapbot, Snake, SmartDownload, SlySearch, SiteSnagger, Siphon, RMA, RepoMonkey, ReGet, Recorder, Reaper, RealDownload, QueryN.Metasearch, Pump, psbot, ProWebWalker, ProPowerBot/2.14, Pockey, PHP version tracker, pcBrowser, pavuk, Papa Foto, PageGrabber, OutfoxBot, Openfind, Offline Navigator, Offline Explorer, Octopus, NPbot, Ninja, NimbleCrawler, niki-bot, NICErsPRO, NG, NextGenSearchBot, NetZIP, Net Vampire, NetSpider, NetMechanic, Netcraft, NetAnts, NearSite, Navroad, NAMEPROTECT, Mozilla.*NEWT, Mozilla/3.Mozilla/2.01, moget, Mister PiX, Missigua Locator, Mirror, MIIxpc, MIDown tool, Microsoft URL Control, Microsoft.URL, Memo, Mata.Hari, Mass Downloader, MarkWatch, Mag-Net, Magnet, LWP::Simple, lwp-trivial, LinkWalker, LNSpiderguy, LinkScan/8.1a.Unix, LinkextractorPro, likse, libWeb/clsHTTP, lftp, LexiBot, larbin, Key2.Density, Kenjin.Spider, Jyxobot, JustView, JOC, JetCar, JennyBot, Jakarta, Iria, Internet Ninja, InterGET, Intelliseek, InfoTekies, InfoNaviRobot, Indy Library, Image Sucker, Image Stripper, IlseBot, humanlinks, HTTrack, HMView, hloader, Harvest, Grafula, GrabNet, gotit, Go-Ahead-Got-It, FrontPage, flunky, Foobot, EyeNetIE, Extractor, Express WebPictures, Exabot, 
EroCrawler, EmailWolf, EmailSiphon, EmailCollector, EirGrabber, ebingbong, EasyDL, eCatch, Drip, dragonfly, Download Wonder, Download Devil, Download Demon, DittoSpyder, DIIbot, DISCo, AIBOT, Aboundex, Custo, Crescent, cosmos, CopyRightCheck, Copier, Collector, ChinaClaw, CherryPicker, CheeseBot, Cegbfeieh, BunnySlippers, Bullseye, BuiltBotTough, Buddy, BotALot, BlowFish, BlackWidow, Black.Hole, Bigfoot, BatchFTP, Bandit, BackWeb, BackDoorBot, 80legs, 360Spider, Java, Cogentbot, Alexibot, asterias, attach, eStyle, WebCrawler, Dumbot, CrocCrawler, ASPSeek, AcoiRobot, DuckDuckBot, BLEXBot, Ips Agent, bot, crawl, spider, outbrain, 008, 192.comAgent, 2ip.ru, 404checker, ^bluefish , ^FDM , ^git, ^Goose, ^HTTPClient, ^Java, ^Jetty, ^Mget, ^Microsoft URL Control, ^NG/[0-9.], ^NING, ^PHP/[0-9], ^RMA, ^Ruby, Ruby/[0-9], ^scrutiny, ^VSE/[0-9], ^WordPress.com, ^XRL/[0-9], a3logics.in, A6-Indexer, a.pr-cy.ru, Aboundex, aboutthedomain, Accoona, acoon, acrylicapps.com/pulp, adbeat, AddThis, ADmantX, adressendeutschland, Advanced Email Extractor v, agentslug, AHC, aihit, aiohttp, Airmail, akula, alertra, alexa site audit, Alibaba.Security.Heimdall, alyze.info, amagit, AndroidDownloadManager, Anemone, Ant.com, Anturis Agent, AnyEvent-HTTP, Apache-HttpClient, AportWorm/[0-9], AppEngine-Google, Arachmo, arachnode, Arachnophilia, archive-com, aria2, asafaweb.com, AskQuickly, Astute, asynchttp, autocite, Autonomy, B-l-i-t-z-B-O-T, Backlink-Ceck.de, Bad-Neighborhood, baypup/[0-9], baypup/colbert, BazQux, BCKLINKS, BDFetch, BegunAdvertising, bibnum.bnf, BigBozz, biglotron, BingLocalSearch, binlar, biz_Directory, Blackboard Safeassign, Bloglovin, BlogPulseLive, BlogSearch, Blogtrottr, boitho.com-dc, BPImageWalker, Braintree-Webhooks, Branch Metrics API, Branch-Passthrough, Browsershots, BUbiNG, Butterfly, BuzzSumo, CakePHP, CapsuleChecker, CaretNail, cb crawl, CC Metadata Scaper, Cerberian Drtrs, CERT.at-Statistics-Survey, cg-eye, changedetection, Charlotte, CheckHost, chkme.com, 
CirrusExplorer, CISPA Vulnerability Notification, CJNetworkQuality, clips.ua.ac.be, Cloud mapping experiment, CloudFlare-AlwaysOnline, Cloudinary/[0-9], cmcm.com, coccoc, CommaFeed, Commons-HttpClient, Comodo SSL Checker, contactbigdatafr, convera, copyright sheriff, cosmos/[0-9], Covario-IDS, CrawlForMe/[0-9], cron-job.org, Crowsnest, curb, Curious George, curl, cuwhois/[0-9], CyberPatrol, cybo.com, DareBoost, DataparkSearch, dataprovider, Daum(oa)?[ /][0-9], DeuSu, developers.google.com/+/web/snippet, Digg, Dispatch, dlvr, DNS-Tools Header-Analyzer, DNSPod-reporting, docoloc, DomainAppender, dotSemantic, downforeveryoneorjustme, downnotifier.com, DowntimeDetector, Dragonfly File Reader, drupact, Drupal (+http://drupal.org/), dubaiindex, EARTHCOM, Easy-Thumb, ec2linkfinder, eCairn-Grabber, ECCP, ElectricMonk, elefent, EMail Exractor, EmailWolf, Embed PHP Library, Embedly, europarchive.org, evc-batch/[0-9], EventMachine HttpClient, Evidon, Evrinid, ExactSearch, ExaleadCloudview, Excel, Exploratodo, ezooms, facebookplatform, fairshare, Faraday v, Faveeo, Favicon downloader, FavOrg, Feed Wrangler, Feedbin, FeedBooster, FeedBucket, FeedBurner, FeedChecker, Feedly, Feedspot, feeltiptop, Fetch API, Fetch/[0-9], Fever/[0-9], findlink, findthatfile, Flamingo_SearchEngine, FlipboardBrowserProxy, FlipboardProxy, FlipboardRSS, fluffy, flynxapp, forensiq, FoundSeoTool/[0-9], free thumbnails, FreeWebMonitoring SiteChecker, Funnelback, g00g1e.net, GAChecker, geek-tools, Genderanalyzer, Genieo, GentleSource, GetLinkInfo, getprismatic.com, GetURLInfo/[0-9], GigablastOpenSource, Go [d.]* package http, Go-http-client, GomezAgent, gooblog, Goodzer/[0-9], Google favicon, Google Keyword Suggestion, Google Keyword Tool, Google Page Speed Insights, Google PP Default, Google Search Console, Google Web Preview, Google-Adwords, Google-Apps-Script, Google-Calendar-Importer, Google-HTTP-Java-Client, Google-Publisher-Plugin, Google-SearchByImage, Google-Site-Verification, 
Google-Structured-Data-Testing-Tool, google_partner_monitoring, GoogleDocs, GoogleHC, GoogleProducer, GoScraper, GoSpotCheck, GoSquared-Status-Checker, gosquared-thumbnailer, GotSiteMonitor, Grammarly, grouphigh, grub-client, GTmetrix, gvfs, HAA(A)?RTLAND http client, Hatena, hawkReader, HEADMasterSEO, HeartRails_Capture, heritrix, hledejLevne.cz/[0-9], Holmes, HootSuite Image proxy, Hootsuite-WebFeed/[0-9], HostTracker, ht://check, htdig, HTMLParser, HTTP-Header-Abfrage, http-kit, HTTP-Tiny, HTTP_Compression_Test, http_request2, http_requester, HttpComponents, httphr, HTTPMon, httpscheck, httpssites_power, httpunit, HttpUrlConnection, httrack, hosterstats, huaweisymantec, HubPages.*crawlingpolicy, HubSpot Connect, HubSpot Marketing Grader, HyperZbozi.cz Feeder, ichiro, IdeelaborPlagiaat, IDG Twitter Links Resolver, IDwhois/[0-9], Iframely, igdeSpyder, IlTrovatore, ImageEngine, Imagga, InAGist, inbound.li parser, InDesign, infegy, infohelfer, InfoWizards Reciprocal Link System PRO, inpwrd.com, Integrity, integromedb, internet_archive, InternetSeer, internetVista monitor, IODC, IOI, ips-agent, iqdb, Irokez, isitup.org, iskanie, iZSearch, janforman, Jigsaw, Jobboerse, jobo, Jobrapido, KeepRight OpenStreetMap Checker, KickFire, KimonoLabs, knows.is, kouio, KrOWLer, kulturarw3, KumKie, L.webis, Larbin, LayeredExtractor, LibVLC, libwww, Liferea, link checker, Link Valet, link_thumbnailer, linkCheck, linkdex, LinkExaminer, linkfluence, linkpeek, LinkTiger, LinkWalker, Lipperhey, livedoor ScreenShot, LoadImpactPageAnalyzer, LoadImpactRload, LongURL API, looksystems.net, ltx71, lwp-trivial, lycos, LYT.SR, mabontland, MagpieRSS, Mail.Ru, MailChimp.com, Mandrill, marketinggrader, MegaIndex.ru, Melvil Rawi, MergeFlow-PageReader, MetaInspector, Metaspinner, MetaURI, Microsearch, Microsoft Office , Microsoft Windows Network Diagnostics, Mindjet, Miniflux, Mnogosearch, mogimogi, Mojolicious (Perl), monitis, Monitority/[0-9], montastic, MonTools, Moreover, Morning Paper, mowser, 
Mrcgiguy, mShots, MVAClient, nagios, Najdi.si, NETCRAFT, NetLyzer FastProbe, netresearch, NetShelter ContentScan, NetTrack, Netvibes, Neustar WPM, NeutrinoAPI, NewsBlur .*Finder, NewsGator, newsme, newspaper, NG-Search, nineconnections.com, NLNZ_IAHarvester, Nmap Scripting Engine, node-superagent, node.io, nominet.org.uk, Norton-Safeweb, Notifixious, notifyninja, nuhk, nutch, Nuzzel, nWormFeedFinder, Nymesis, Ocelli/[0-9], oegp, okhttp, Omea Reader, omgili, Online Domain Tools, OpenCalaisSemanticProxy, Openstat, OpenVAS, Optimizer, Orbiter, OrgProbe/[0-9], ow.ly, ownCloud News, Page Analyzer, Page Valet, page2rss, page_verifier, PagePeeker, Pagespeed/[0-9], Panopta, panscient, parsijoo, PayPal IPN, Pcore-HTTP, Pearltrees, peerindex, Peew, PhantomJS, Photon, phpcrawl, phpservermon, Pi-Monster, Pingoscope, PingSpot, Pinterest, Pizilla, Ploetz + Zeller, Plukkie, PocketParser, Pompos, Porkbun, Port Monitor, postano, PostPost, postrank, PowerPoint, Priceonomics Analysis Engine, Prlog, probethenet, Project 25499, Promotion_Tools_www.searchenginepromotionhelp.com, prospectb2b, Protopage, proximic, PTST , PTST/[0-9]+, Pulsepoint XT3 web scraper, Python-httplib2, python-requests, Python-urllib, Qirina Hurdler, Qseero, Qualidator.com SiteAnalyzer, Quora Link Preview, Qwantify, Radian6, RankSonicSiteAuditor, Readability, RealPlayer%20Downloader, RebelMouse, redback, Redirect Checker Tool, ReederForMac, request.js, ResponseCodeTest/[0-9], RestSharp, RetrevoPageAnalyzer, Riddler, Rival IQ, Robosourcer, Robozilla/[0-9], ROI Hunter, SalesIntelligent, SauceNAO, SBIder, Scoop, scooter, ScoutJet, ScoutURLMonitor, Scrapy, Scrubby, SearchSight, semanticdiscovery, semanticjuice, SEO Browser, Seo Servis, seo-nastroj.cz, Seobility, SEOCentro, SeoCheck, SeopultContentAnalyzer, SEOstats, Server Density Service Monitoring, servernfo.com, Seznam screenshot-generator, Shelob, Shoppimon Analyzer, ShoppimonAgent/[0-9], ShopWiki, ShortLinkTranslate, shrinktheweb, SilverReader, SimplePie, 
SimplyFast, Site-Shot, Site24x7, SiteBar, SiteCondor, siteexplorer.info, SiteGuardian, Siteimprove.com, Sitemap(s)? Generator, Siteshooter B0t, SiteTruth, sitexy.com, SkypeUriPreview, slider.com, SMRF URL Expander, SMUrlExpander, Snappy, SniffRSS, sniptracker, Snoopy, SortSite, spaziodati, Specificfeeds, speedy, SPEng, Spinn3r, spray-can, Sprinklr , spyonweb, Sqworm, SSL Labs, Rambler, Statastico, StatusCake, Stratagems Kumo, Stroke.cz, StudioFACA, suchen, summify, Super Monitoring, Surphace Scout, SwiteScraper, Symfony2 BrowserKit, SynHttpClient-Built, Sysomos, T0PHackTeam, Tarantula, teoma, terrainformatica.com, The Expert HTML Source Viewer, theinternetrules, theoldreader.com, Thumbshots, ThumbSniper, TinEye, Tiny Tiny RSS, topster, touche.com, Traackr.com, truwoGPS, tweetedtimes.com, Tweetminster, Twikle, Twingly, Typhoeus, ubermetrics-technologies, uclassify, UdmSearch, UnwindFetchor, updated, Upflow, URLChecker, URLitor.com, urlresolver, Urlstat, UrlTrends Ranking Updater, Vagabondo, via ggpht.com GoogleImageProxy, visionutils, vkShare, voltron, Vortex/[0-9], voyager, VSAgent/[0-9], VSB-TUO/[0-9], VYU2, w3af.org, W3C-checklink, W3C-mobileOK, W3C_I18n-Checker, W3C_Unicorn, wangling, Wappalyzer, WbSrch, web-capture.net, Web-Monitoring, Web-sniffer, Webauskunft, WebCapture, webcollage, WebCookies, WebCorp, WebDoc, WebFetch, WebImages, WebIndex, webkit2png, webmastercoffee, webmon , webscreenie, Webshot, Website Analyzer, websitepulse[+ ]checker, Websnapr, Websquash.com, Webthumb/[0-9], WebThumbnail, WeCrawlForThePeace, WeLikeLinks, WEPA, WeSEE, wf84, wget, WhatsApp, WhatsMyIP, WhatWeb, Whibse, Whynder Magnet, Windows-RSS-Platform, WinHttpRequest, wkhtmlto, wmtips, Woko, WomlpeFactory, Word, WordPress, wotbox, WP Engine Install Performance API, WPScan, wscheck, WWW-Mechanize, www.monitor.us, XaxisSemanticsClassifier, Xenu Link Sleuth, XING-contenttabreceiver/[0-9], XmlSitemapGenerator, xpymep([0-9]?).exe, Y!J-(ASR, BSC), Yaanb, yacy, Yahoo Ad monitoring, Yahoo 
Link Preview, YahooCacheSystem, YahooYSMcm, YandeG, yanga, yeti, Yo-yo, Yoleo Consumer, yoogliFetchAgent, YottaaMonitor, yourls.org, Zao, Zemanta Aggregator, Zend\\Http\\Client, Zend_Http_Client, zgrab, ZnajdzFoto, ZyBorg
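Because the ‘Bad Bots’ list contains very generic entries such as ‘bot’, ‘crawler’ and ‘spider’, a major crawler like Googlebot would also match it. The sketch below shows one way to resolve this, by checking the ‘Good Bots’ list first. It is an assumption about the matching order, not the plugin's documented behaviour, it uses only short excerpts of both lists, and it treats every entry as a plain case-insensitive substring (entries written as patterns, such as ‘^Java’, are left out).

```python
# Short excerpts of the two lists above, for illustration only.
GOOD_BOTS = ("googlebot", "bingbot", "slurp", "yandex", "baidu")
BAD_BOTS = ("bot", "spider", "crawler", "MJ12bot", "AhrefsBot", "HTTrack")

def classify(user_agent):
    """Classify a User Agent string as 'good', 'bad' or 'unknown'."""
    ua = user_agent.lower()
    # Good bots are checked first, so generic entries like 'bot' cannot catch them.
    if any(name.lower() in ua for name in GOOD_BOTS):
        return "good"
    if any(name.lower() in ua for name in BAD_BOTS):
        return "bad"
    return "unknown"

print(classify("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"))
print(classify("Mozilla/5.0 (compatible; AhrefsBot/5.1; +http://ahrefs.com/robot/)"))
print(classify("Mozilla/5.0 (Windows NT 10.0) Firefox/49.0"))
```

User Agent strings are easy to forge, so a name-based check like this is best combined with the IP-based rules described earlier.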

Which bots are included in the ‘Bad Bots’ List using HTACCESS blocking of this plugin?

aesop_com_spiderman, alexibot, backweb, bandit, batchftp, bigfoot, black.?hole, blackwidow, blowfish, botalot, buddy, builtbottough, bullseye, capture, fetch, finder, harvest, Java, larbin, libww, library, link, nutch, Retrieve, cheesebot, cherrypicker, chinaclaw, collector, copier, copyrightcheck, crawlcosmos, crescent, curl, custo, da, diibot, disco, dittospyder, dragonfly, drip, easydl, ebingbong, ecatch, eirgrabber, emailcollector, emailsiphon, emailwolf, erocrawler, exabot, eyenetie, filehound, flashget, flunky, frontpage, getright, getweb, go.?zilla, go-ahead-got-it, gotit, grabnet, grafula, harvest, hloader, hmview, httplib, httrack, humanlinks, ilsebot, infonavirobot, infotekies, intelliseek, interget, iria, jennybot, jetcar, joc, justview, jyxobot, kenjin, keyword, larbin, leechftp, lexibot, lftp, libweb, likse, linkscan, linkwalker, lnspiderguy, lwp, magnet, mag-net, markwatch, mata.?hari, memo, microsoft.?url, midown.?tool, miixpc, mirror, missigua, mrsputnik, mister.?pix, moget, mozilla.?newt, nameprotect, navroad, backdoorbot, nearsite, net.?vampire, netants, netcraft, netmechanic, netspider, nextgensearchbot, attach, nicerspro, nimblecrawler, npbot, octopus, offline.?explorer, offline.?navigator, openfind, outfoxbot, pagegrabber, papa, pavuk, pcbrowser, php.?version.?tracker, pockey, propowerbot, prowebwalker, psbot, pump, queryn, recorder, realdownload, reaper, reget, true_robot, repomonkey, rma, internetseer, sitesnagger, siphon, slysearch, smartdownload, snake, snapbot, snoopy, sogou, spacebison, spankbot, spanner, sqworm, superbot, scraper, siphon, spider, tool, superhttp, surfbot, asterias, suzuran, szukacz, takeout, teleport, telesoft, the.?intraformant, thenomad, tighttwatbot, titan, urldispatcher, turingos, turnitinbot, urly.?warning, vacuum, vci, voideye, whacker, widow, wisenutbot, wwwoffle, xaldon, xenu, zeus, zyborg, anonymouse, craftbot, download, extract, stripper, sucker, ninja, clshttp, webspider, leacher, coll?ector, grabber, 
webpictures, libwww-perl, aesop_com_spiderman, libwww-perl, Purebot, Sosospider, AboutUsBot, Johnny5, Python-urllib, Yeti, TurnitinBot, GoScraper, Kehalim, DoCoMo, SurveyBot, spbot, BDFetch, EasyDL, CamontSpider, Chilkat, Z?mEu, GoScraper, Kehalim, DoCoMo, SurveyBot, spbot, BDFetch, EasyDL, CamontSpider, Chilkat, Z?mEu, zip, emaile, enhancer, fetch, go.?is, auto, bandit, clip, copier, master, reaper, sauger, site.?quester, whack
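As a rough illustration of how a list like this can be applied, the sketch below joins a few of the entries above into one case-insensitive regular expression and tests User-Agent strings against it. This is not the plugin's actual code (the plugin applies its list through .htaccess rules); the helper name `is_bad_bot` and the sample User-Agent strings are made up for the example, and substring matching is an assumption.

```python
import re

# A handful of entries copied from the list above. Several are regex fragments:
# "black.?hole" matches "blackhole", "black hole" and "black-hole".
BAD_BOT_PATTERNS = [
    "black.?hole",
    "blackwidow",
    "go.?zilla",
    "httrack",
    "libwww-perl",
    "Python-urllib",
]

# Join the fragments into a single alternation, matched case-insensitively.
BAD_BOT_RE = re.compile("|".join(BAD_BOT_PATTERNS), re.IGNORECASE)


def is_bad_bot(user_agent: str) -> bool:
    """Return True if the User-Agent contains any listed pattern (hypothetical helper)."""
    return BAD_BOT_RE.search(user_agent) is not None


print(is_bad_bot("Mozilla/5.0 (compatible; HTTrack 3.0x; Windows 98)"))       # True
print(is_bad_bot("Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/120.0"))  # False
```

If entries are matched as substrings like this, very short ones such as ‘da’, ‘link’ or ‘tool’ can also match legitimate User-Agent strings, so it is worth reviewing the Blocked Bots List after enabling the feature.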

What known referral spam websites are blocked by this plugin?

0n-line.tv, 1-99seo.com, 1-free-share-buttons.com, 100dollars-seo.com, 12masterov.com, 1pamm.ru, 1webmaster.ml, 24×7-server-support.site, 2your.site, 3-letter-domains.net, 4webmasters.org, 5forex.ru, 6hopping.com, 7makemoneyonline.com, 7zap.com, abc.xyz, abcdefh.xyz, abcdeg.xyz, acads.net, acunetix-referrer.com, adcash.com, adf.ly, adspart.com, adtiger.tk, adventureparkcostarica.com, adviceforum.info, advokateg.xyz, affordablewebsitesandmobileapps.com, afora.ru, akuhni.by, alfabot.xyz, alibestsale.com, allknow.info, allnews.md, allwomen.info, alpharma.net, altermix.ua, amt-k.ru, anal-acrobats.hol.es, anapa-inns.ru, android-style.com, anticrawler.org, arendakvartir.kz, arendovalka.xyz, arkkivoltti.net, artparquet.ru, aruplighting.com, autovideobroadcast.com, aviva-limoux.com, azartclub.org, azlex.uz, baixar-musicas-gratis.com, baladur.ru, balitouroffice.com, bard-real.com.ua, best-seo-offer.com, best-seo-software.xyz, best-seo-solution.com, bestmobilityscooterstoday.com, bestwebsitesawards.com, bif-ru.info, biglistofwebsites.com, billiard-classic.com.ua, biteg.xyz, bizru.info, black-friday.ga, blackhatworth.com, blogtotal.de, blue-square.biz, bluerobot.info, boltalko.xyz, boostmyppc.com, brakehawk.com, brateg.xyz, break-the-chains.com, brk-rti.ru, brothers-smaller.ru, budmavtomatika.com.ua, buketeg.xyz, bukleteg.xyz, burger-imperia.com, burn-fat.ga, buttons-for-website.com, buttons-for-your-website.com, buy-cheap-online.info, buy-cheap-pills-order-online.com, buy-forum.ru, call-of-duty.info, cardiosport.com.ua, cartechnic.ru, cenokos.ru, cenoval.ru, cezartabac.ro, chcu.net, chinese-amezon.com, ci.ua, cityadspix.com, civilwartheater.com, clicksor.com, coderstate.com, codysbbq.com, compliance-alex.xyz, compliance-alexa.xyz, compliance-andrew.xyz, compliance-brian.xyz, compliance-don.xyz, compliance-donald.xyz, compliance-elena.xyz, compliance-fred.xyz, compliance-george.xyz, compliance-irvin.xyz, compliance-ivan.xyz, compliance-john.top, compliance-julianna.top, 
conciergegroup.org, connectikastudio.com, cookie-law-enforcement-aa.xyz, cookie-law-enforcement-bb.xyz, cookie-law-enforcement-cc.xyz, cookie-law-enforcement-dd.xyz, cookie-law-enforcement-ee.xyz, cookie-law-enforcement-ff.xyz, cookie-law-enforcement-gg.xyz, cookie-law-enforcement-hh.xyz, cookie-law-enforcement-ii.xyz, cookie-law-enforcement-jj.xyz, cookie-law-enforcement-kk.xyz, cookie-law-enforcement-ll.xyz, cookie-law-enforcement-mm.xyz, cookie-law-enforcement-nn.xyz, cookie-law-enforcement-oo.xyz, cookie-law-enforcement-pp.xyz, cookie-law-enforcement-qq.xyz, cookie-law-enforcement-rr.xyz, cookie-law-enforcement-ss.xyz, cookie-law-enforcement-tt.xyz, cookie-law-enforcement-uu.xyz, cookie-law-enforcement-vv.xyz, cookie-law-enforcement-ww.xyz, cookie-law-enforcement-xx.xyz, cookie-law-enforcement-yy.xyz, cookie-law-enforcement-zz.xyz, copyrightclaims.org, covadhosting.biz, cubook.supernew.org, customsua.com.ua, cyber-monday.ga, dailyrank.net, darodar.com, dbutton.net, delfin-aqua.com.ua, demenageur.com, descargar-musica-gratis.net, detskie-konstruktory.ru, dipstar.org, djekxa.ru, djonwatch.ru, dktr.ru, dogsrun.net, dojki-hd.com, domain-tracker.com, dominateforex.ml, domination.ml, doska-vsem.ru, dostavka-v-krym.com, drupa.com, dvr.biz.ua, e-buyeasy.com, e-kwiaciarz.pl, easycommerce.cf, ecomp3.ru, econom.co, edakgfvwql.ru, egovaleo.it, ekatalog.xyz, ekto.ee, elmifarhangi.com, erot.co, escort-russian.com, este-line.com.ua, eu-cookie-law-enforcement2.xyz, euromasterclass.ru, europages.com.ru, eurosamodelki.ru, event-tracking.com, eyes-on-you.ga, fast-wordpress-start.com, fbdownloader.com, fix-website-errors.com, floating-share-buttons.com, for-your.website, forex-procto.ru, forsex.info, forum69.info, free-floating-buttons.com, free-share-buttons.com, free-social-buttons.com, free-social-buttons.xyz, free-social-buttons7.xyz, free-traffic.xyz, free-video-tool.com, freenode.info, freewhatsappload.com, fsalas.com, generalporn.org, germes-trans.com, 
get-free-social-traffic.com, get-free-traffic-now.com, get-your-social-buttons.info, getlamborghini.ga, getrichquick.ml, getrichquickly.info, ghazel.ru, ghostvisitor.com, girlporn.ru, gkvector.ru, glavprofit.ru, gobongo.info, goodprotein.ru, googlemare.com, googlsucks.com, guardlink.org, handicapvantoday.com, havepussy.com, hdmoviecamera.net, hdmoviecams.com, hongfanji.com, hosting-tracker.com, howopen.ru, howtostopreferralspam.eu, hulfingtonpost.com, humanorightswatch.org, hundejo.com, hvd-store.com, ico.re, igadgetsworld.com, igru-xbox.net, ilikevitaly.com, iloveitaly.ro, iloveitaly.ru, ilovevitaly.co, ilovevitaly.com, ilovevitaly.info, ilovevitaly.org, ilovevitaly.ru, ilovevitaly.xyz, iminent.com, imperiafilm.ru, increasewwwtraffic.info, investpamm.ru, iskalko.ru, ispaniya-costa-blanca.ru, it-max.com.ua, jjbabskoe.ru, justprofit.xyz, kabbalah-red-bracelets.com, kambasoft.com, kazrent.com, keywords-monitoring-success.com, keywords-monitoring-your-success.com, kino-fun.ru, kino-key.info, kinopolet.net, knigonosha.net, konkursov.net, law-check-two.xyz, law-enforcement-ee.xyz, law-enforcement-bot-ff.xyz, law-enforcement-check-three.xyz, law-six.xyz, laxdrills.com, legalrc.biz, littleberry.ru, livefixer.com, lsex.xyz, lumb.co, luxup.ru, magicdiet.gq, makemoneyonline.com, makeprogress.ga, manualterap.roleforum.ru, maridan.com.ua, marketland.ml, masterseek.com, mebelcomplekt.ru, mebeldekor.com.ua, med-zdorovie.com.ua, meds-online24.com, minegam.com, mirobuvi.com.ua, mirtorrent.net, mobilemedia.md, monetizationking.net, moneytop.ru, mosrif.ru, moyakuhnia.ru, muscle-factory.com.ua, myftpupload.com, myplaycity.com, net-profits.xyz, niki-mlt.ru, novosti-hi-tech.ru, nufaq.com, o-o-11-o-o.com, o-o-6-o-o.com, o-o-6-o-o.ru, o-o-8-o-o.com, o-o-8-o-o.ru, online-hit.info, online-templatestore.com, onlinetvseries.me, onlywoman.org, ooo-olni.ru, ownshop.cf, ozas.net, palvira.com.ua, petrovka-online.com, photokitchendesign.com, pizza-imperia.com, pizza-tycoon.com, popads.net, 
pops.foundation, pornhub-forum.ga, pornhub-forum.uni.me, pornhub-ru.com, pornoforadult.com, pornogig.com, pornoklad.ru, portnoff.od.ua, pozdravleniya-c.ru, priceg.com, pricheski-video.com, prlog.ru, producm.ru, prodvigator.ua, prointer.net.ua, promoforum.ru, pron.pro, psa48.ru, qualitymarketzone.com, quit-smoking.ga, qwesa.ru, rank-checker.online, rankings-analytics.com, ranksonic.info, ranksonic.net, ranksonic.org, rapidgator-porn.ga, rcb101.ru, rednise.com, replica-watch.ru, research.ifmo.ru, resellerclub.com, responsive-test.net, reversing.cc, rightenergysolutions.com.au, rospromtest.ru, rumamba.com, rusexy.xyz, sad-torg.com.ua, sady-urala.ru, sanjosestartups.com, santasgift.ml, savetubevideo.com, savetubevideo.info, screentoolkit.com, scripted.com, search-error.com, semalt.com, semaltmedia.com, seo-2-0.com, seo-platform.com, seo-smm.kz, seoanalyses.com, seoexperimenty.ru, seopub.net, sexsaoy.com, sexyali.com, sexyteens.hol.es, share-buttons.xyz, sharebutton.net, sharebutton.to, shop.xz618.com, sibecoprom.ru, simple-share-buttons.com, site-auditor.online, site5.com, siteripz.net, sitevaluation.org, sledstvie-veli.net, slftsdybbg.ru, slkrm.ru, slow-website.xyz, smailik.org, smartphonediscount.info, snip.to, snip.tw, soaksoak.ru, social-button.xyz, social-buttons-ii.xyz, social-buttons.com, social-traffic-1.xyz, social-traffic-2.xyz, social-traffic-3.xyz, social-traffic-4.xyz, social-traffic-5.xyz, social-traffic-7.xyz, social-widget.xyz, socialbuttons.xyz, socialseet.ru, socialtrade.biz, sohoindia.net, solitaire-game.ru, solnplast.ru, sosdepotdebilan.com, speedup-my.site, spin2016.cf, spravka130.ru, steame.ru, success-seo.com, superiends.org, supervesti.ru, taihouse.ru, tattooha.com, tedxrj.com, theguardlan.com, tomck.com, top1-seo-service.com, topquality.cf, topseoservices.co, traffic-cash.xyz, traffic2cash.org, traffic2cash.xyz, traffic2money.com, trafficgenius.xyz, trafficmonetize.org, trafficmonetizer.org, trion.od.ua, uasb.ru, unpredictable.ga, uptime.com, 
uptimechecker.com, uzungil.com, varikozdok.ru, vesnatehno.com, video–production.com, video-woman.com, videos-for-your-business.com, viel.su, viktoria-center.ru, vodaodessa.com, vodkoved.ru, w3javascript.com, wallpaperdesk.info, web-revenue.xyz, webmaster-traffic.com, webmonetizer.net, website-analyzer.info, website-speed-check.site, website-speed-checker.site, websites-reviews.com, websocial.me, wmasterlead.com, woman-orgasm.ru, wordpress-crew.net, wordpresscore.com, works.if.ua, ykecwqlixx.ru, youporn-forum.ga, youporn-forum.uni.me, youporn-ru.com, yourserverisdown.com, zastroyka.org, zoominfo.com, zvetki.ru
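The following is a minimal sketch of how a referrer blocklist of this kind can be checked, assuming the comparison is made on the host name of the HTTP Referer header. It is not taken from the plugin; the function name `is_referrer_spam` and the decision to also match subdomains are assumptions made for the example.

```python
from urllib.parse import urlparse

# A few domains copied from the list above.
REFERRER_SPAM_DOMAINS = {
    "semalt.com",
    "darodar.com",
    "buttons-for-website.com",
    "ilovevitaly.com",
}


def is_referrer_spam(referer: str) -> bool:
    """Return True if the Referer's host is a listed domain or a subdomain of one."""
    # hostname is None for empty or scheme-less values, which are treated as clean.
    host = (urlparse(referer).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in REFERRER_SPAM_DOMAINS)


print(is_referrer_spam("http://semalt.semalt.com/crawler.php?u=http://example.com"))  # True
print(is_referrer_spam("https://www.google.com/search?q=semalt.com"))                 # False
```

Comparing the parsed host rather than searching the whole Referer string avoids blocking a visitor who merely has a listed domain somewhere in the query string.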

What are WordPress shortcodes?

Shortcodes in WordPress are small pieces of code that let you do various things with little effort. They were introduced in WordPress 2.5 to let people execute code inside WordPress posts, pages, and widgets without writing any code directly. This allows you to embed files or create objects that would normally require a lot of code in a single line. For example, the shortcode this plugin provides for adding a secured version of an e-mail address looks like this:

[tartarus_add_secure_email email="example@email.com"]

Sometimes you may want to show the literal text of a shortcode in a post instead of running it. To do this, escape it using double brackets. For example, to display the tartarus_add_secure_email shortcode itself (the shortcode that adds a secured version of your e-mail address, which cannot be harvested by e-mail-stealing crawlers), write:

[[tartarus_add_secure_email email="example@email.com"]]
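To make the difference between the two forms concrete, here is a simplified Python model of shortcode rendering: single brackets run the handler, double brackets output the shortcode text with one pair of brackets removed. This is only a sketch of the behavior described above, not WordPress's parser; `render`, `HANDLERS` and the placeholder output `<secured e-mail>` are hypothetical, since the plugin's real output is not shown here.

```python
import re

# Matches [name attrs] and, through the optional outer brackets, [[name attrs]].
SHORTCODE_RE = re.compile(r"(\[?)\[(\w+)([^\]]*)\](\]?)")


def secure_email_placeholder(attrs: str) -> str:
    """Stand-in for the plugin's handler; the real markup is not reproduced here."""
    return "<secured e-mail>"


# Registered shortcodes (hypothetical registry for this sketch).
HANDLERS = {"tartarus_add_secure_email": secure_email_placeholder}


def render(text: str, handlers=HANDLERS) -> str:
    def replace(match):
        open_extra, name, attrs, close_extra = match.groups()
        if name not in handlers:
            return match.group(0)  # unknown shortcode: leave the text untouched
        if open_extra and close_extra:
            return "[" + name + attrs + "]"  # escaped: show the shortcode as text
        return open_extra + handlers[name](attrs.strip()) + close_extra

    return SHORTCODE_RE.sub(replace, text)


print(render('Contact: [tartarus_add_secure_email email="example@email.com"]'))
# Contact: <secured e-mail>
print(render('Type [[tartarus_add_secure_email email="example@email.com"]] in the editor'))
# Type [tartarus_add_secure_email email="example@email.com"] in the editor
```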

Shortcodes simplify the addition of features to a WordPress site. With shortcodes, the HTML and other markup are added dynamically, directly into the post or page where the user wants them to appear.

Results: If everything is configured correctly, you can go to the plugin administration page and check the results in the ‘Page Access List’ subpage (where you can see logs of your visitors) and in the ‘Blocked Bots List’ subpage (where all banned bots are listed). You can also include the following shortcode in your website:

Here are some examples of Tartarus Crawl Control plugin features:

User Registration page reCAPTCHA integration:

Forgot Password page reCAPTCHA integration:

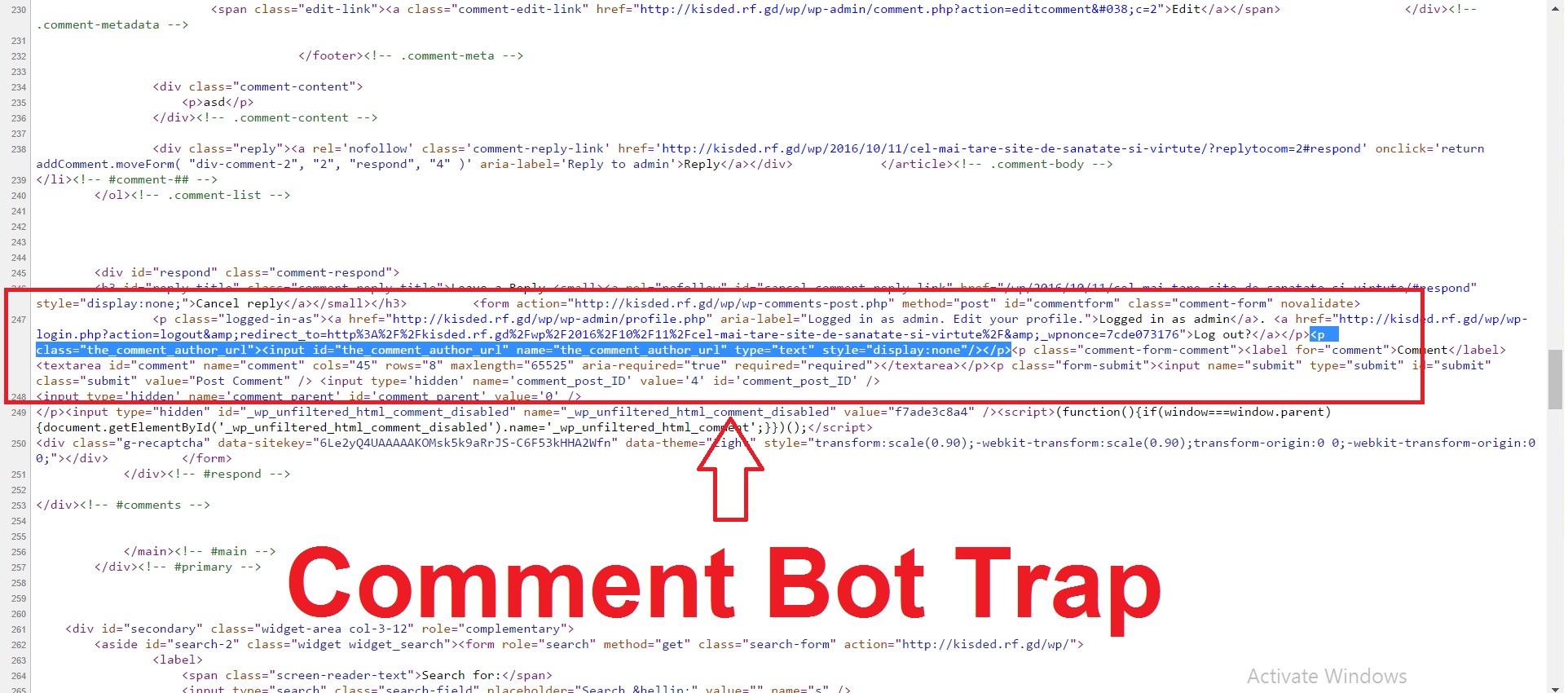

Trap link shown in the ‘view page source’ window for one of the blog posts. The link is invisible to users. robots.txt tells crawlers not to follow it and it also carries a ‘nofollow’ tag, so only bad bots will follow it, and those that do get banned:
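The trap-link idea can be sketched in a few lines: any client that requests the hidden URL is added to a ban list, and banned clients are refused from then on. This is an illustrative model only, not the plugin's implementation; the path `/trap-link/`, the in-memory `banned_ips` set and the `handle_request` function are assumptions made for the example.

```python
TRAP_PATH = "/trap-link/"  # hypothetical URL of the hidden link

# robots.txt entry that well-behaved crawlers obey, so they never reach the trap.
ROBOTS_TXT = "User-agent: *\nDisallow: " + TRAP_PATH + "\n"

banned_ips = set()  # a real site would persist this, e.g. in the database


def handle_request(ip: str, path: str) -> int:
    """Return the HTTP status code to send for this request."""
    if ip in banned_ips:
        return 403  # already banned: access denied
    if path == TRAP_PATH:
        banned_ips.add(ip)  # followed the hidden link despite robots.txt
        return 403
    return 200


print(handle_request("203.0.113.7", "/blog/"))   # 200
print(handle_request("203.0.113.7", TRAP_PATH))  # 403
print(handle_request("203.0.113.7", "/blog/"))   # 403
```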

The honeypot trap set up in the comment form. The field is invisible to users, so only bots will fill it in, and those that do are blocked:
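A honeypot check of this kind boils down to one test on the submitted form: if the hidden field contains anything, the submission is treated as coming from a bot. The sketch below assumes the form arrives as a dictionary; the field name `website_url` and the function `is_bot_submission` are hypothetical and not taken from the plugin.

```python
HONEYPOT_FIELD = "website_url"  # hypothetical name of the hidden form field


def is_bot_submission(form: dict) -> bool:
    """Return True if the hidden honeypot field was filled in."""
    # Human visitors never see the field, so it stays empty or absent.
    return bool(form.get(HONEYPOT_FIELD, "").strip())


print(is_bot_submission({"comment": "Nice post!", "website_url": ""}))                      # False
print(is_bot_submission({"comment": "Buy now", "website_url": "http://spam.example"}))      # True
```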

The page access listing in the plugin settings

Example of an access denied message

Example of the reCAPTCHA integration in the login page

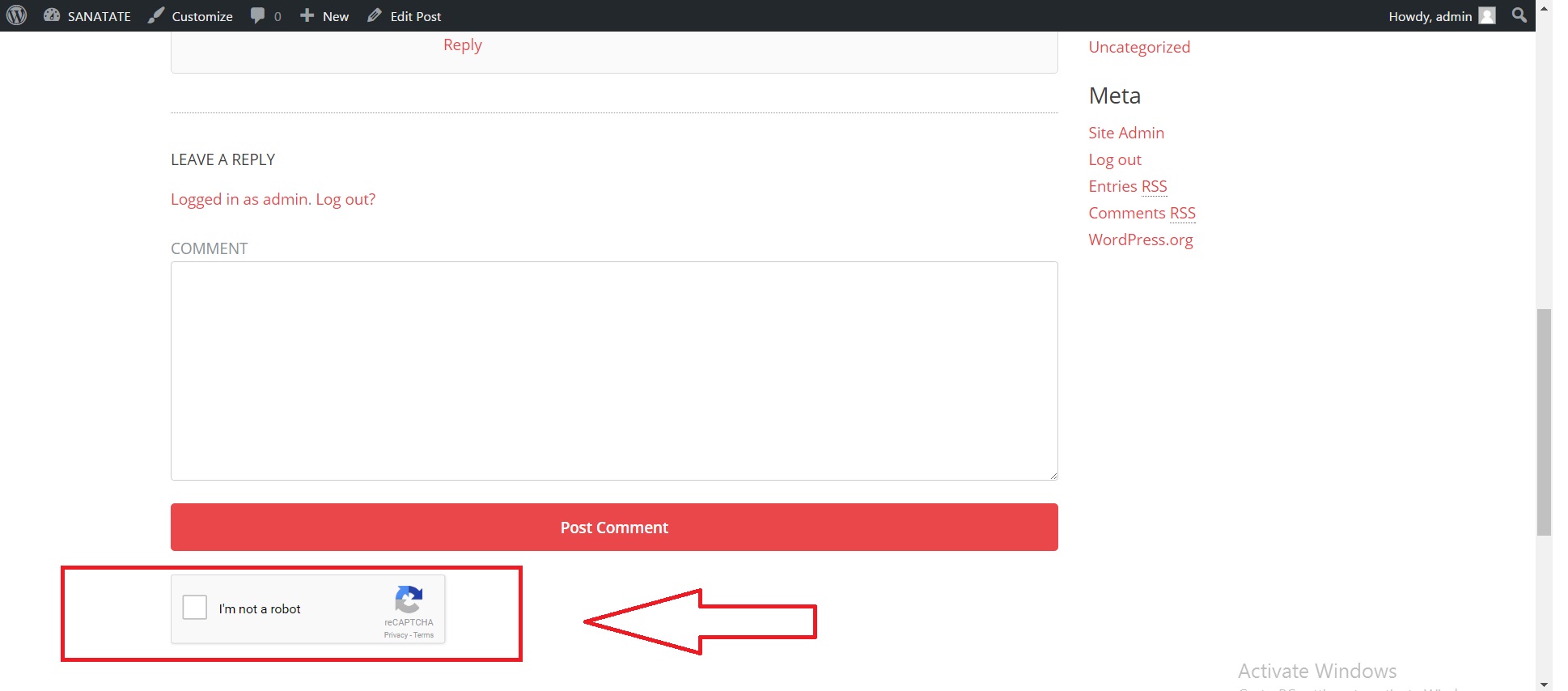

Example of the reCAPTCHA integration in the comment form

Summary

The Tartarus Crawl Control plugin is a simple yet powerful tool for managing the bots and spiders that crawl your website. It can protect you from the hassle caused by ‘bad bots’ that try to find vulnerabilities in your website or spam your comments section. Setting up and configuring the plugin is straightforward. Now, let’s go and enjoy the results of this great plugin! Have fun using it!

Sources and Credits

This component was made by Szabi Kisded. For more information and support, contact us at support@coderevolution.ro

Once again, thank you so much for purchasing this item. As I said at the beginning, I’d be glad to help you if you have any questions regarding this plugin and I’ll do my best to assist.

Kisded